You have mastered Docker. Your applications run in containers, your docker-compose.yml files are battle-tested, and your development workflow is smooth. But now you are facing the inevitable question every growing team encounters: when should you migrate to Kubernetes, and how do you do it without breaking everything?

This guide is written for Docker users who are ready to take the next step. We will walk through the entire migration journey — from evaluating readiness to running your first production workloads on Kubernetes — with practical examples you can follow along with.

When Should You Migrate from Docker to Kubernetes?

Not every project needs Kubernetes. Before investing weeks of migration effort, honestly evaluate whether your situation calls for it:

You NEED Kubernetes if:

- You are running 10+ containers across multiple hosts

- You need automatic scaling based on traffic/load

- You require zero-downtime deployments with automated rollbacks

- You have multiple teams deploying different services independently

- You need self-healing — containers that automatically restart on failure

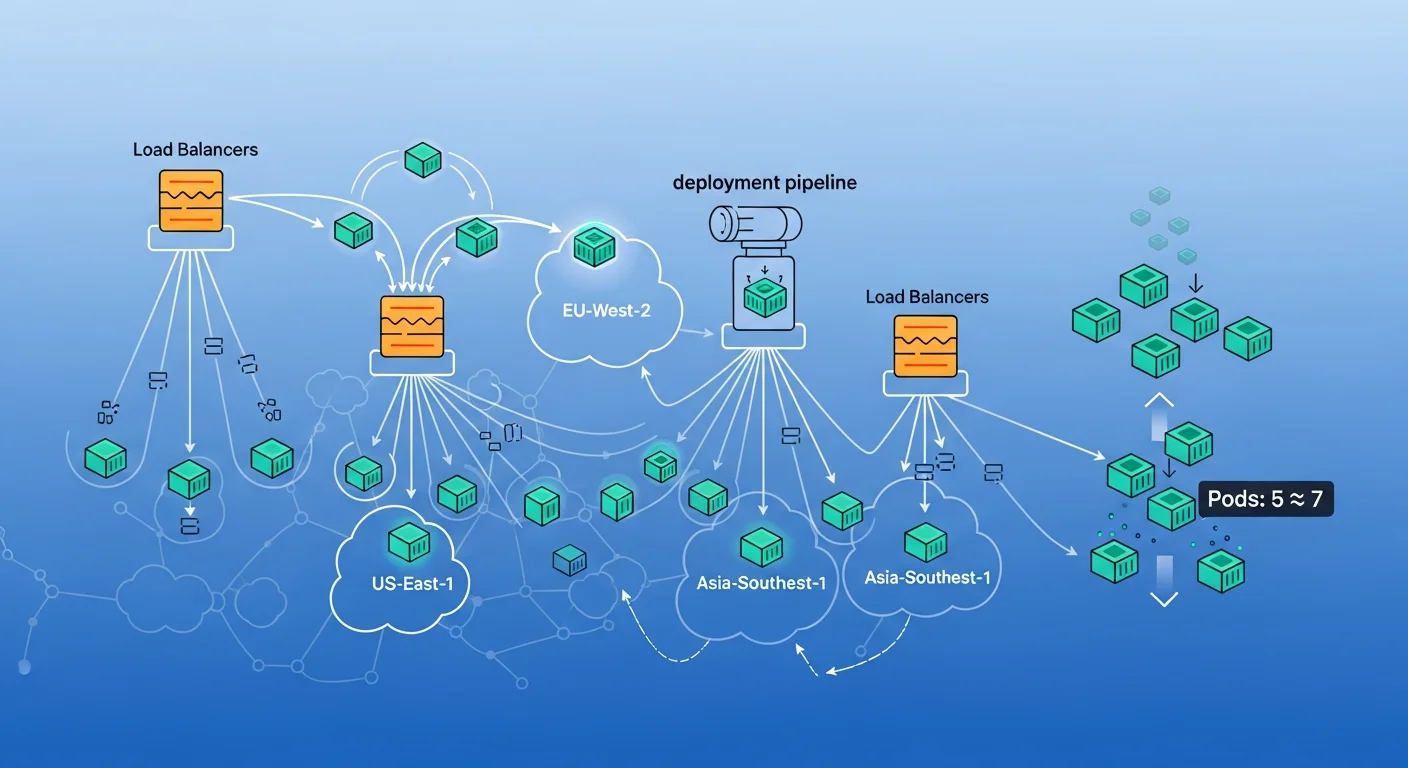

- You are running across multiple cloud providers or regions

Docker Compose is still fine if:

- You are running a single application on one or two servers

- Your team is small (1-3 developers)

- Traffic is predictable and does not require auto-scaling

- You can tolerate brief downtime during deployments

Step 1: Understanding the Architecture Mapping

The first step in migration is understanding how Docker Compose concepts map to Kubernetes resources. Here is your translation table:

| Docker Compose | Kubernetes | Purpose |
|---|---|---|
| service | Deployment + Service | Run and expose containers |
| volumes (named) | PersistentVolumeClaim | Persistent data storage |
| networks | Service DNS names + NetworkPolicy | Service discovery and traffic isolation |
| ports | Service (NodePort/LoadBalancer) + Ingress | External access |
| environment | ConfigMap + Secret | Configuration management |
| depends_on | initContainers + readinessProbe | Startup ordering |
| restart: always | restartPolicy + livenessProbe | Self-healing |
| docker-compose.yml | Helm Chart | Templated deployment package |
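
To make the table concrete, here is a minimal Python sketch that treats it as a lookup and lists the Kubernetes resources a single Compose service implies. The names `COMPOSE_TO_K8S` and `resources_for` are hypothetical, and the lookup is a simplification for planning, not a conversion tool.

```python
# Lookup derived from the mapping table; keys are Compose service fields.
COMPOSE_TO_K8S = {
    "ports": ["Ingress"],
    "volumes": ["PersistentVolumeClaim"],
    "environment": ["ConfigMap", "Secret"],
    "depends_on": ["initContainers", "readinessProbe"],
    "restart": ["restartPolicy", "livenessProbe"],
}


def resources_for(service):
    """List the Kubernetes resources one Compose service definition implies."""
    needed = ["Deployment", "Service"]  # every Compose service maps to these
    for key in service:
        for resource in COMPOSE_TO_K8S.get(key, []):
            if resource not in needed:
                needed.append(resource)
    return needed


# A Compose service written as a plain dict (what a YAML parser would return).
postgres = {
    "image": "postgres:16",
    "volumes": ["pgdata:/var/lib/postgresql/data"],
    "environment": ["POSTGRES_DB=myapp"],
}
print(resources_for(postgres))
```

Running this kind of inventory over every service in a Compose file gives a rough checklist of the manifests you will need to write.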

Step 2: Preparing Your Docker Images for Kubernetes

Your existing Docker images might work, but they likely need optimization for Kubernetes. Here are the critical changes:

2.1 Health Check Endpoints

Kubernetes relies heavily on health checks to manage container lifecycle. Add explicit health and readiness endpoints to every service:

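# Python Flask example
# Assumes `app` is your Flask application and `db` is a Flask-SQLAlchemy instance.
from sqlalchemy import text

@app.route('/healthz')
def health():
    # Liveness: the process is up and can answer HTTP requests
    return {'status': 'healthy'}, 200

@app.route('/readyz')
def ready():
    # Readiness: check the database connection before accepting traffic
    try:
        db.session.execute(text('SELECT 1'))
        return {'status': 'ready'}, 200
    except Exception:
        return {'status': 'not ready'}, 503

2.2 Graceful Shutdown Handling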

Kubernetes sends SIGTERM before killing pods. Your application must handle this signal to finish in-flight requests:
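
For a Python service such as the Flask example in 2.1, the same idea can be sketched with the standard `signal` module. This is a minimal illustration under assumptions: `serve`, `handle_one_request`, and `close_connections` are hypothetical stand-ins for your own serving loop and cleanup code. The Node.js version follows after it.

```python
import signal
import threading

# Set by the signal handler; checked by the serving loop.
shutdown_requested = threading.Event()


def handle_sigterm(signum, frame):
    """Record that Kubernetes asked the process to stop."""
    shutdown_requested.set()


# Must be registered from the main thread.
signal.signal(signal.SIGTERM, handle_sigterm)


def serve(handle_one_request, close_connections):
    """Handle requests until SIGTERM arrives, then release resources.

    Both arguments are hypothetical callables standing in for your
    request handling and your database cleanup.
    """
    while not shutdown_requested.is_set():
        handle_one_request()  # a request that has started always completes
    close_connections()
```

Production WSGI servers such as Gunicorn already perform a graceful shutdown on SIGTERM, so a handler like this mainly matters for custom workers and background loops.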

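// Node.js example
// Assumes `app` is an Express-style app and `db` is a connection pool exposing end().
const server = app.listen(3000);

process.on('SIGTERM', () => {
  console.log('SIGTERM received, starting graceful shutdown');

  // Stop accepting new connections and let in-flight requests finish
  server.close(() => {
    // Close database connections
    db.end().then(() => {
      console.log('Shutdown complete');
      process.exit(0);
    });
  });

  // Force shutdown after 25 seconds, before the default 30-second
  // terminationGracePeriodSeconds expires and Kubernetes sends SIGKILL
  setTimeout(() => {
    console.error('Forced shutdown after timeout');
    process.exit(1);
  }, 25000);
});

2.3 Externalize All Configuration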

In Kubernetes, configuration comes from ConfigMaps and Secrets — not .env files or hardcoded values. Update your apps to read from environment variables:

# Instead of a hardcoded connection string:
# DATABASE_URL=postgresql://user:pass@localhost:5432/mydb

# Use environment variables that K8s will inject:
import os

db_host = os.environ['DB_HOST']
db_port = os.environ.get('DB_PORT', '5432')
db_name = os.environ['DB_NAME']
db_user = os.environ['DB_USER']
db_pass = os.environ['DB_PASSWORD']

Step 3: Converting Docker Compose to Kubernetes Manifests

Let us convert a real-world Docker Compose stack to Kubernetes. Here is our example — a typical web application with a PostgreSQL database and Redis cache:

Original docker-compose.yml

version: "3.8"

services:
  webapp:
    build: .
    ports:
      - "8080:3000"
    environment:
      - DB_HOST=postgres
      - REDIS_URL=redis://redis:6379
      - NODE_ENV=production
    depends_on:
      - postgres
      - redis
    restart: always

  postgres:
    image: postgres:16
    volumes:
      - pgdata:/var/lib/postgresql/data
    environment:
      - POSTGRES_DB=myapp
      - POSTGRES_USER=appuser
      - POSTGRES_PASSWORD=secretpassword

  redis:
    image: redis:7-alpine
    volumes:
      - redisdata:/data

volumes:
  pgdata:
  redisdata:

Kubernetes Equivalent: Deployment for webapp
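
The manifest below sets `replicas: 3`, `maxSurge: 1`, and `maxUnavailable: 0`. A small Python sketch of the arithmetic shows why that combination keeps full capacity during a rollout; `rollout_bounds` is a hypothetical helper and only covers absolute numbers, not the percentage form these fields also accept.

```python
def rollout_bounds(replicas, max_surge, max_unavailable):
    """Return (min_available, max_total) pod counts during a rolling update."""
    min_available = replicas - max_unavailable  # pods that must stay available
    max_total = replicas + max_surge            # old + new pods allowed at once
    return min_available, max_total


min_available, max_total = rollout_bounds(replicas=3, max_surge=1, max_unavailable=0)
print(f"at least {min_available} available, at most {max_total} pods in total")
```

With these values Kubernetes starts one extra pod, waits for it to pass its readiness probe, and only then removes an old one, so the number of available pods never drops below three.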

# webapp-deployment.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: webapp
  labels:
    app: webapp
spec:
  replicas: 3  # Scale beyond what Compose offers!
  selector:
    matchLabels:
      app: webapp
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxSurge: 1
      maxUnavailable: 0  # Zero downtime
  template:
    metadata:
      labels:
        app: webapp
    spec:
      containers:
        - name: webapp
          image: registry.example.com/webapp:1.2.0
          ports:
            - containerPort: 3000
          env:
            - name: DB_HOST
              value: postgres-service
            - name: DB_PASSWORD
              valueFrom:
                secretKeyRef:
                  name: db-credentials
                  key: password
            - name: REDIS_URL
              value: redis://redis-service:6379
            - name: NODE_ENV
              value: production
          resources:
            requests:
              cpu: 100m
              memory: 128Mi
            limits:
              cpu: 500m
              memory: 512Mi
          livenessProbe:
            httpGet:
              path: /healthz
              port: 3000
            initialDelaySeconds: 10
            periodSeconds: 15
          readinessProbe:
            httpGet:
              path: /readyz
              port: 3000
            initialDelaySeconds: 5
            periodSeconds: 5
      initContainers:
        - name: wait-for-db
          image: busybox:1.36
          command: ['sh', '-c',
            'until nc -z postgres-service 5432; do sleep 2; done']

Kubernetes: Service + Ingress for External Access

# webapp-service.yaml
apiVersion: v1
kind: Service
metadata:
  name: webapp-service
spec:
  selector:
    app: webapp
  ports:
    - port: 80
      targetPort: 3000
  type: ClusterIP
---
# webapp-ingress.yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: webapp-ingress
  annotations:
    cert-manager.io/cluster-issuer: letsencrypt-prod
    # ingress-nginx rate limit: requests per second per client IP
    nginx.ingress.kubernetes.io/limit-rps: "100"
spec:
  ingressClassName: nginx  # Assumes the ingress-nginx controller is installed
  tls:
    - hosts:
        - app.example.com
      secretName: webapp-tls
  rules:
    - host: app.example.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: webapp-service
                port:
                  number: 80

Step 4: Persistent Storage in Kubernetes

This is where most Docker-to-Kubernetes migrations struggle. Docker volumes are simple; Kubernetes persistent storage requires understanding PersistentVolumes (PV), PersistentVolumeClaims (PVC), and StorageClasses.

PostgreSQL with Persistent Storage

# postgres-pvc.yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: postgres-pvc
spec:
  accessModes:
    - ReadWriteOnce
  storageClassName: standard  # Or gp3 on AWS, premium-rwo on GCP
  resources:
    requests:
      storage: 20Gi
---
# postgres-service.yaml
# Headless Service that provides the DNS name `postgres-service`
# referenced by the StatefulSet and by the webapp Deployment.
apiVersion: v1
kind: Service
metadata:
  name: postgres-service
spec:
  clusterIP: None
  selector:
    app: postgres
  ports:
    - port: 5432
---
# postgres-statefulset.yaml
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: postgres
spec:
  serviceName: postgres-service
  replicas: 1
  selector:
    matchLabels:
      app: postgres
  template:
    metadata:
      labels:
        app: postgres
    spec:
      containers:
        - name: postgres
          image: postgres:16
          ports:
            - containerPort: 5432
          env:
            - name: POSTGRES_DB
              value: myapp
            - name: POSTGRES_USER
              valueFrom:
                secretKeyRef:
                  name: db-credentials
                  key: username
            - name: POSTGRES_PASSWORD
              valueFrom:
                secretKeyRef:
                  name: db-credentials
                  key: password
            - name: PGDATA
              value: /var/lib/postgresql/data/pgdata
          volumeMounts:
            - name: postgres-storage
              mountPath: /var/lib/postgresql/data
          resources:
            requests:
              cpu: 250m
              memory: 256Mi
            limits:
              cpu: "1"
              memory: 1Gi
      volumes:
        - name: postgres-storage
          persistentVolumeClaim:
            claimName: postgres-pvc

Step 5: Secrets Management

In Docker Compose, you might use .env files or hardcoded environment variables. In Kubernetes, use Secrets (and consider external secret managers for production):
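
It helps to know what a Secret actually contains: the values under its `data` field are base64-encoded, which is an encoding and not encryption. The following minimal Python sketch builds the kind of manifest that the `kubectl create secret` command in this step produces. `build_secret` is a hypothetical helper and the password is a placeholder.

```python
import base64
import json


def build_secret(name, values):
    """Build a Secret manifest as a dict, base64-encoding every value."""
    encoded = {
        key: base64.b64encode(value.encode("utf-8")).decode("ascii")
        for key, value in values.items()
    }
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name},
        "type": "Opaque",
        "data": encoded,
    }


# Placeholder credentials for illustration only; never commit real ones.
secret = build_secret(
    "db-credentials",
    {"username": "appuser", "password": "example-only"},
)
print(json.dumps(secret, indent=2))
```

Because anyone who can read the Secret can decode it, access to Secrets should be restricted with RBAC, and manifests like this one should stay out of Git.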

# Create secrets imperatively (don't commit these to Git!)
kubectl create secret generic db-credentials \
  --from-literal=username=appuser \
  --from-literal=password="$(openssl rand -base64 32)"

# For production, use External Secrets Operator
# to sync from AWS Secrets Manager, Vault, etc.
apiVersion: external-secrets.io/v1beta1
kind: ExternalSecret
metadata:
  name: db-credentials
spec:
  refreshInterval: 1h
  secretStoreRef:
    kind: ClusterSecretStore
    name: aws-secrets-manager
  target:
    name: db-credentials
  data:
    - secretKey: password
      remoteRef:
        key: production/database
        property: password

Step 6: Packaging Everything with Helm

Helm is to Kubernetes what Docker Compose is to Docker — a way to package, template, and manage multi-resource deployments. Create a Helm chart for your application:

# Create the chart scaffold
helm create myapp

# Your chart structure:
myapp/
  Chart.yaml
  values.yaml          # Default configuration
  values-prod.yaml     # Production overrides
  templates/
    deployment.yaml
    service.yaml
    ingress.yaml
    configmap.yaml
    secret.yaml
    hpa.yaml           # Horizontal Pod Autoscaler
    pvc.yaml

values.yaml (templated configuration)

# values.yaml
replicaCount: 3

image:
  repository: registry.example.com/webapp
  tag: "1.2.0"
  pullPolicy: IfNotPresent

service:
  type: ClusterIP
  port: 80

ingress:
  enabled: true
  hostname: app.example.com
  tls: true

resources:
  requests:
    cpu: 100m
    memory: 128Mi
  limits:
    cpu: 500m
    memory: 512Mi

autoscaling:
  enabled: true
  minReplicas: 3
  maxReplicas: 20
  targetCPUUtilization: 70
  targetMemoryUtilization: 80

postgres:
  enabled: true
  storage: 20Gi
  storageClass: standard

Deploy with Helm
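
The production command in this section passes more than one values file. Helm layers them left to right: nested maps are merged key by key, later files win on conflicts, and lists are replaced whole. The Python sketch below illustrates that precedence. It is not Helm's implementation, `merge_values` is a hypothetical name, and the production overrides are invented for the example.

```python
def merge_values(base, override):
    """Recursively merge `override` into a copy of `base`; later values win."""
    merged = dict(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_values(merged[key], value)  # merge nested maps
        else:
            merged[key] = value  # scalars and lists are replaced outright
    return merged


# Excerpt of values.yaml from this guide.
defaults = {
    "replicaCount": 3,
    "resources": {"limits": {"cpu": "500m", "memory": "512Mi"}},
}
# Hypothetical values-prod.yaml overrides.
prod = {
    "replicaCount": 5,
    "resources": {"limits": {"memory": "1Gi"}},
}

effective = merge_values(defaults, prod)
print(effective)
```

Here the CPU limit survives from the defaults while the replica count and memory limit come from the production file, which is why an override file only needs to list the values that differ.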

# Run these from the directory that contains the myapp/ chart

# Deploy to staging
helm install myapp ./myapp -f myapp/values.yaml -n staging

# Deploy to production with overrides
helm install myapp ./myapp -f myapp/values.yaml -f myapp/values-prod.yaml -n production

# Upgrade with zero downtime
helm upgrade myapp ./myapp -f myapp/values-prod.yaml -n production

# Rollback to revision 1 if something goes wrong
helm rollback myapp 1 -n production

Step 7: CI/CD Pipeline Integration

A proper CI/CD pipeline automates the entire build-test-deploy cycle. Here is a GitHub Actions workflow for Kubernetes deployments:

# .github/workflows/deploy.yml
# Assumes the runner is already logged in to the registry and has
# kubectl/helm credentials for the cluster; those steps are not shown.
name: Build and Deploy to Kubernetes

on:
  push:
    branches: [main]

jobs:
  build-and-deploy:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4

      - name: Build and push Docker image
        run: |
          docker build -t registry.example.com/webapp:${{ github.sha }} .
          docker push registry.example.com/webapp:${{ github.sha }}

      - name: Deploy to Kubernetes
        run: |
          helm upgrade --install myapp ./helm/myapp \
            --set image.tag=${{ github.sha }} \
            --set replicaCount=3 \
            -f helm/values-prod.yaml \
            -n production \
            --wait --timeout 5m

      - name: Verify deployment
        run: |
          kubectl rollout status deployment/webapp -n production
          # Run smoke tests
          curl -sf https://app.example.com/healthz || exit 1

Step 8: Monitoring Your Kubernetes Cluster

Kubernetes provides much richer observability than Docker Compose. Set up the essential monitoring stack:

# Install Prometheus + Grafana stack
helm repo add prometheus-community https://prometheus-community.github.io/helm-charts
helm install monitoring prometheus-community/kube-prometheus-stack \
  -n monitoring --create-namespace \
  --set grafana.adminPassword=your-secure-password

# Key metrics to monitor:
# - Pod CPU/memory usage vs. requests/limits
# - HTTP response times (p50, p95, p99)
# - Error rates (5xx responses)
# - Pod restart counts (indicates crashes)
# - Persistent volume usage
# - Node resource utilization

Common Migration Pitfalls to Avoid

- Not setting resource limits: without limits, a single pod can consume all of a node's CPU and memory and starve or evict the other pods running on it

- Missing health checks — Without readiness probes, Kubernetes sends traffic to containers that are not ready

- Using latest tag — Always use specific image tags for reproducible deployments

- Ignoring pod disruption budgets — Without PDBs, node maintenance can take down all replicas simultaneously

- Storing state in containers: data written to the container filesystem is lost when the container restarts or the pod is rescheduled, so keep it in a PVC or an external store

- Not configuring Horizontal Pod Autoscaler — Manual scaling defeats half the purpose of Kubernetes
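
For the autoscaling pitfall, it is useful to see how the Horizontal Pod Autoscaler picks a replica count. Its documented core rule is desiredReplicas = ceil(currentReplicas × currentMetric / targetMetric), clamped to the configured minimum and maximum. The Python sketch below applies that rule to the autoscaling values from Step 6; `desired_replicas` is a hypothetical helper, and it leaves out the tolerance band and stabilization windows the real controller also applies.

```python
import math


def desired_replicas(current_replicas, current_utilization, target_utilization,
                     min_replicas, max_replicas):
    """Apply the basic HPA scaling rule and clamp to the configured bounds."""
    raw = math.ceil(current_replicas * current_utilization / target_utilization)
    return max(min_replicas, min(max_replicas, raw))


# 3 pods averaging 90% CPU against a 70% target, bounds 3..20.
print(desired_replicas(3, 90, 70, min_replicas=3, max_replicas=20))
```

In this example the autoscaler would add a fourth pod. The same rule scales back down when utilization falls, but never below `minReplicas`.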

Frequently Asked Questions

Can I use Docker Compose and Kubernetes together?

Yes. Many teams use Docker Compose for local development and Kubernetes for staging/production. Tools like Skaffold and Tilt bridge the gap by automatically syncing your local code with a Kubernetes cluster during development.

How much does Kubernetes cost compared to Docker Compose?

Kubernetes itself is free and open source. The cost difference comes from infrastructure: a Kubernetes cluster needs at least 3 nodes (for high availability), while Docker Compose can run on a single server. Managed Kubernetes services (EKS, GKE, AKS) add $70-150/month for the control plane. However, the auto-scaling capability often reduces overall costs by eliminating over-provisioning.

Should I use a managed Kubernetes service or self-host?

For most teams, use a managed service (AWS EKS, Google GKE, or Azure AKS). Self-hosting Kubernetes is a full-time job. Managed services handle control plane upgrades, etcd backups, and security patches. Self-hosting only makes sense if you have strict data sovereignty requirements or a dedicated platform engineering team.

How do I migrate my database without downtime?

Use a blue-green migration strategy: (1) Set up the new database in Kubernetes with replication from the old one. (2) Once in sync, update the application to point to the new database. (3) Verify, then decommission the old database. For PostgreSQL, tools like pglogical or Patroni can handle the replication step.

What about Docker Swarm — is it a simpler alternative to Kubernetes?

Docker Swarm is simpler but has a much smaller ecosystem and community. As of 2026, the industry has overwhelmingly chosen Kubernetes as the standard. New features, tooling, and cloud provider integrations are built for Kubernetes first. We recommend investing in Kubernetes knowledge for long-term career growth. See our book Docker Swarm: Container Orchestration for a comparison.
